So I decided to deploy on my own Windows Server 2016. After googling the subject, I realized that the interface there is not the most intuitive, and that its functionality is inferior to that of ESXi hosts. My second thought was XenServer, which I had heard about at a previous job.

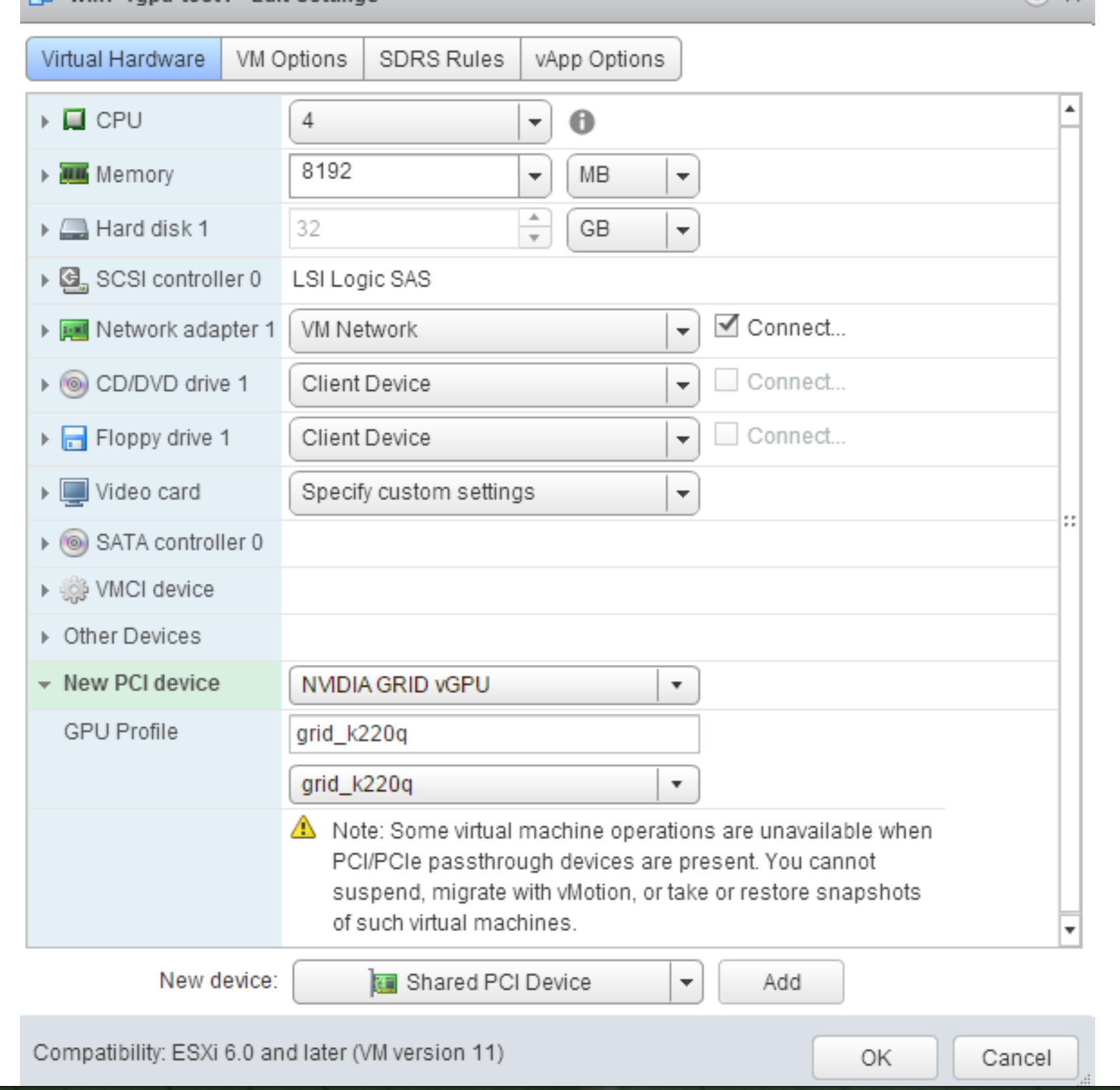

I had never worked with hypervisors up close before; the most I had laid hands on was RDP and VirtualBox. However, the desire to whip up my own server (with blackjack and gateways) featuring iSCSI and PCI passthrough (for gaming, of course, and for a NAS on a thin client, although I also needed a 1C server) prevailed over reason, and I started digging in that direction. The first thought that came to my mind was Windows Server: deploying a few Hyper-V machines or making use of RemoteFX seemed like a good idea. The server was deployed and the virtual machine was running, when all of a sudden I ran into a limit of 1 GB of video memory allocated to the client, which is not enough for games.

Run the virtual machine and connect to it using one of the supported remote desktop protocols, such as Mechdyne TGX, and verify that the vGPU is recognized by opening the NVIDIA Control Panel. On Windows, you can alternatively open the Windows Device Manager; the vGPU should appear under Display adapters. For more information, see the NVIDIA vGPU Software Graphics Driver in the NVIDIA Virtual GPU Software Documentation.

Set up NVIDIA vGPU guest software licensing for each vGPU and add the license credentials in the NVIDIA Control Panel. For more information, see How NVIDIA vGPU Software Licensing Is Enforced in the NVIDIA Virtual GPU Software Documentation.

For NVIDIA vGPUs, to get information on the physical GPU and vGPU, you can use the NVIDIA System Management Interface by entering the `nvidia-smi` command on the host. Its output is a table with the columns GPU, Name, Persistence-M, Bus-Id, Disp.A, Volatile Uncorr., Fan, Temp, Perf, Pwr:Usage/Cap, Memory-Usage, GPU-Util, and Compute M.; one row of the output lists GPU 3 as a Tesla M60 with persistence mode On and a bus ID beginning `00000000:8C:00.` For more information, see NVIDIA System Management Interface nvidia-smi in the NVIDIA Virtual GPU Software Documentation.

Using the Administration Portal or the VM Portal to power off the virtual machine forces it to fully clean the memory.
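
The `nvidia-smi` check mentioned above can also be scripted. The following is only a minimal sketch, assuming the host's `nvidia-smi` supports the `--query-gpu` and `--format=csv,noheader,nounits` options and reports numeric memory values; the helper names `parse_gpu_csv` and `query_gpus` and the choice of fields are illustrative and do not come from the NVIDIA documentation.

```python
import csv
import io
import subprocess

# Fields requested from nvidia-smi (an assumed selection for illustration).
QUERY_FIELDS = ["index", "name", "pci.bus_id", "memory.used", "memory.total"]


def parse_gpu_csv(text):
    """Turn `--format=csv,noheader,nounits` output into a list of dicts."""
    gpus = []
    for row in csv.reader(io.StringIO(text), skipinitialspace=True):
        if not row:
            continue  # ignore blank lines
        record = dict(zip(QUERY_FIELDS, (cell.strip() for cell in row)))
        # Assumes numeric values; a field reported as "[N/A]" would raise here.
        for key in ("index", "memory.used", "memory.total"):
            record[key] = int(record[key])
        gpus.append(record)
    return gpus


def query_gpus():
    """Run nvidia-smi on the host and return one dict per physical GPU."""
    cmd = [
        "nvidia-smi",
        "--query-gpu=" + ",".join(QUERY_FIELDS),
        "--format=csv,noheader,nounits",
    ]
    result = subprocess.run(cmd, capture_output=True, text=True, check=True)
    return parse_gpu_csv(result.stdout)
```

Calling `query_gpus()` on the host returns one record per GPU, which is easier to compare across reboots than the human-readable table. Keeping the parsing in `parse_gpu_csv` separate from the subprocess call means it can be exercised on saved output without a GPU present.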

Windows only: Powering off the virtual machine from within the Windows guest operating system sometimes sends the virtual machine into hibernate mode, which does not completely clear the memory and can lead to subsequent problems.

To set up the host:

1. Download and install the NVIDIA driver for the host. For information on getting the driver, see the Drivers page on the NVIDIA website.
2. If the NVIDIA software installer did not create the /etc/modprobe.d/nf file, create it manually.
3. Open the /etc/modprobe.d/nf file in a text editor and add the required lines to the end of the file.
4. Regenerate the initial ramdisk for the current kernel, then reboot. Alternatively, if you need to use a prior supported kernel version with mediated devices, regenerate the initial ramdisk for all installed kernel versions.
5. Check that the kernel loaded the nvidia_vgpu_vfio module. The module listing should include a line such as `vfio 32695 3 vfio_mdev,nvidia_vgpu_vfio,vfio_iommu_type1`.
6. Check that the rvice service is running. Its status should report `Loaded: loaded (/usr/lib/systemd/system/rvice enabled vendor preset: disabled)` and `Active: active (running) since Fri 10:17:36 CET 5h 8min ago`.

To add a vGPU to a virtual machine:

1. In the Administration Portal, click Compute → Virtual Machines.
2. Click the name of the virtual machine to go to the details view.
3. Click the Manage vGPU button.
4. Select a vGPU type and the number of instances that you would like to use with this virtual machine.
5. Select On for Secondary display adapter for VNC to add a second emulated QXL or VGA graphics adapter as the primary graphics adapter for the console in addition to the vGPU.
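
The check that the kernel loaded the nvidia_vgpu_vfio module can be automated in a few lines. This is a rough sketch, assuming the usual three- or four-column `lsmod` layout (module, size, use count, used-by list); the function names `parse_lsmod`, `vgpu_module_present`, and `current_lsmod` are hypothetical helpers, not part of any NVIDIA or Red Hat tooling.

```python
import subprocess


def parse_lsmod(text):
    """Map each module name to the set of names in its "Used by" column."""
    modules = {}
    for line in text.splitlines():
        parts = line.split()
        # Skip blank lines and the "Module  Size  Used by" header row.
        if len(parts) < 3 or parts[0] == "Module":
            continue
        users = set(parts[3].split(",")) if len(parts) > 3 else set()
        users.discard("")
        modules[parts[0]] = users
    return modules


def vgpu_module_present(text, module="nvidia_vgpu_vfio"):
    """True if `module` is loaded itself or listed as a user of another module."""
    modules = parse_lsmod(text)
    return module in modules or any(module in users for users in modules.values())


def current_lsmod():
    """Return the live lsmod output from the host."""
    return subprocess.run(
        ["lsmod"], capture_output=True, text=True, check=True
    ).stdout
```

On the host, `vgpu_module_present(current_lsmod())` should be true after the reboot; with the `vfio` line quoted above as input, the module is found in the used-by list.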
